The differences between core switches and ordinary switches

Nov 19, 2024

Many friends have asked about the differences between core switches and ordinary switches. Today, let's explore this topic together.

Data center-grade switches are characterized by high-quality service assurance and the ability to identify and control traffic. They feature end-to-end flow control and backpressure mechanisms that keep data transmission stable and reliable and smooth out traffic surges. Compared with ordinary switches, they offer higher reliability and security, simpler network configuration, and faster service deployment.

1. What is a Data Center Core Switch?

A "core switch" is not a distinct type of switch; it is a switch placed at the core layer, the backbone of the network. Large enterprises and internet cafes typically buy core switches for their strong network expansion capabilities, which helps protect existing investments. As a rule of thumb, a core switch becomes necessary once a network grows past roughly 50 computers; below that, a router is usually sufficient, and above about 100 computers a core switch is essential for stable, high-speed performance. The term is relative to the network architecture: in a small LAN with a few computers, an 8-port switch can be regarded as the core switch. In the networking industry, however, "core switch" usually refers to a managed Layer 2 or Layer 3 switch with high throughput.

2. Differences Between Core Switches and Ordinary Switches

2.1 Port Differences

Ordinary Ethernet switches typically have 24 to 48 ports, mostly Gigabit or Fast Ethernet, and are used mainly for user access or for aggregating traffic from access-layer switches. They support simple VLAN configuration, basic routing protocols, and basic SNMP functions, but their backplane bandwidth is relatively low.

Core switches typically offer more ports, often in a modular design that allows flexible combinations of optical and Gigabit Ethernet ports. They are usually Layer 3 devices that support routing protocols, ACLs, QoS, load balancing, and other advanced features. The key difference is that core switches offer significantly higher backplane bandwidth and typically include redundant engine modules in a primary/backup configuration.

2.2 Differences in User Connection or Access to the Network

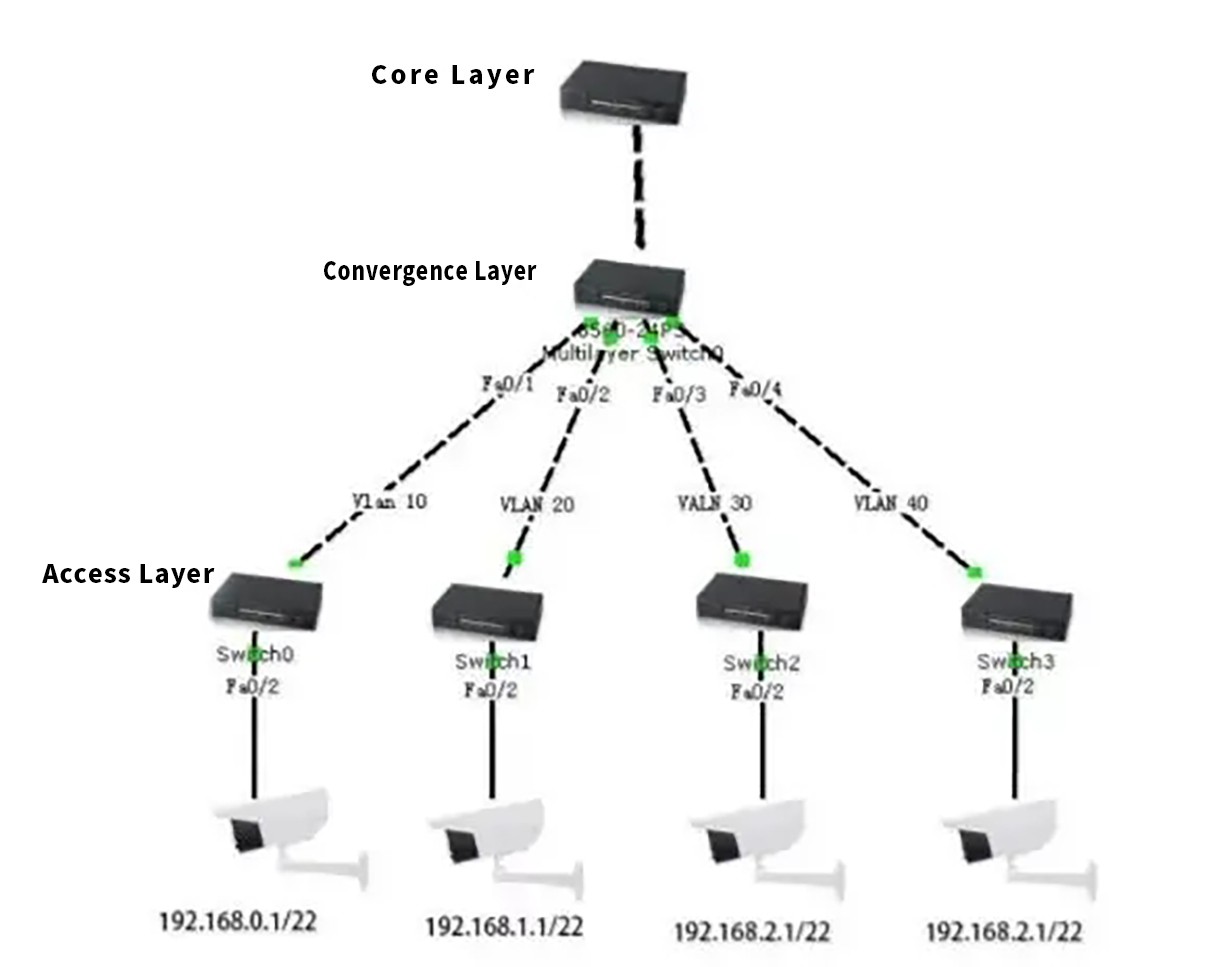

The part of the network that directly faces user connections is called the access layer. The section between the access layer and the core layer is called the distribution or aggregation layer. The purpose of the access layer is to let end users connect to the network, so access-layer switches are characterized by low cost and high port density. Aggregation-layer switches are the convergence points for multiple access-layer switches. They must handle all traffic from access-layer devices and provide upstream links to the core layer. Compared with access-layer switches, they therefore have higher performance, fewer interfaces, and higher switching speeds.

The backbone of the network is known as the core layer. Its main purpose is to provide an optimized, reliable backbone by forwarding traffic at high speed. Core-layer switches therefore need higher reliability, performance, and throughput.

Ethernet switches in the core layer, aggregation layer, and access layer.

In contrast to ordinary switches, core switches must possess the following attributes: large buffer, high capacity, virtualization, scalability, and module redundancy.

2.3 Large Buffer Technology

Data center switches replace the output-port buffering used in traditional switches with a distributed buffer architecture. Their buffer capacity is far larger: over 1 GB, compared with the 2-4 MB typical of ordinary switches. Core switches can buffer up to 200 milliseconds of burst traffic per port at the full 10 Gbps line rate, ensuring zero packet loss during traffic spikes and making them well suited to data centers with high server density and bursty traffic.
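To put the 200-millisecond figure in perspective, the arithmetic can be sketched as below. This is a rough back-of-the-envelope calculation, not a vendor sizing method: it assumes the worst case in which a port must hold the entire burst without draining any of it, and it uses decimal megabytes (1 MB = 10^6 bytes). The function names are illustrative only.

```python
def burst_buffer_bytes(line_rate_bps: int, burst_ms: int) -> int:
    """Bytes needed to hold a burst arriving at line rate for burst_ms milliseconds.

    Assumption: nothing drains from the buffer while the burst arrives.
    """
    return line_rate_bps * burst_ms // (8 * 1000)


def burst_ms_absorbed(buffer_bytes: int, line_rate_bps: int) -> float:
    """Milliseconds of line-rate traffic that a buffer of buffer_bytes can hold."""
    return buffer_bytes * 8 * 1000 / line_rate_bps


TEN_GBPS = 10_000_000_000

# 200 ms of traffic at 10 Gbps, as described in the text
per_port = burst_buffer_bytes(TEN_GBPS, 200)
print(f"{per_port // 1_000_000} MB per port")  # prints "250 MB per port"

# The same calculation in reverse for a 4 MB buffer
print(f"{burst_ms_absorbed(4_000_000, TEN_GBPS)} ms")  # prints "3.2 ms"
```

Under these assumptions, a 4 MB buffer absorbs only a few milliseconds of line-rate traffic on one 10 Gbps port, which illustrates why burst-heavy data center traffic calls for much larger buffers.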

2.4 High-Capacity Devices

Data center traffic is characterized by dense application scheduling and sudden bursts. Ordinary switches, designed primarily for basic interconnection, cannot identify and control services precisely, nor can they respond quickly and guarantee zero packet loss under heavy load, which compromises business continuity. System reliability depends mainly on device reliability.

Consequently, ordinary switches fall short of data center requirements. Data center switches must offer high-capacity forwarding and support high-density 10 Gbps line cards, such as 48-port 10 Gbps cards. To forward at full speed on a 48-port 10 Gbps card, data center switches must use a distributed Clos switching architecture. In addition, as 40G and 100G become more widespread, 8-port 40G cards and 4-port 100G cards are gradually being commercialized. Data center switches with 40G and 100G cards have already entered the market, meeting the high-density application needs of data centers.
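The forwarding capacity each of these cards demands from the switch can be estimated with simple multiplication. The sketch below is illustrative only: the card list comes from the paragraph above, while the doubling for full-duplex operation is an assumption based on the common convention of counting both directions of every port when quoting switching capacity.

```python
# (port count, per-port speed in Gbps) for the cards named in the text
CARDS = {
    "48 x 10G": (48, 10),
    "8 x 40G": (8, 40),
    "4 x 100G": (4, 100),
}


def card_capacity_gbps(ports: int, speed_gbps: int) -> int:
    """Aggregate one-direction line rate of all ports on the card."""
    return ports * speed_gbps


def fabric_bandwidth_gbps(ports: int, speed_gbps: int) -> int:
    """Fabric bandwidth for wire-speed forwarding, counting both directions.

    Assumption: every port sends and receives at line rate at the same time.
    """
    return 2 * card_capacity_gbps(ports, speed_gbps)


for name, (ports, speed) in CARDS.items():
    print(name, card_capacity_gbps(ports, speed), fabric_bandwidth_gbps(ports, speed))
```

By this estimate the 48-port 10 Gbps card is the most demanding of the three at 480 Gbps in one direction, which is consistent with the text singling it out as the case that needs a distributed switching fabric.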

2.5 Virtualization Technology

Data center network devices need to be highly manageable, secure, and reliable, so data center switches must also support virtualization. Virtualization turns physical resources into logically manageable resources, breaking down the barriers between physical structures. Network device virtualization includes many-to-one, one-to-many, and many-to-many technologies.

Virtualization allows multiple network devices to be managed as one and services running on a single device to be completely isolated from each other. This can cut data center management costs by 40% and boost IT utilization by around 25%.

2.6 Scalability

Scalability should include two aspects:

a) Slot Number:

Slots hold the various functional and interface modules. Since each interface module provides a fixed number of ports, the number of slots fundamentally determines how many ports a switch can accommodate. In addition, every functional module (such as supervisor engine modules, IP voice modules, extended service modules, network monitoring modules, and security service modules) occupies a slot, so the number of slots also fundamentally determines the switch's scalability.

b) Module Types:

The greater the variety of supported modules (e.g., LAN, WAN, ATM, extended function modules), the greater the switch's scalability. For example, LAN interface modules should include RJ-45 modules, GBIC modules, SFP modules, 10Gbps modules, etc., to meet the needs of complex environments and network applications in large and medium-sized networks.

2.7 Module Redundancy

Redundancy keeps the network running safely. No vendor can guarantee that its products will never fail during operation, and how quickly a device can fail over when a fault occurs depends on its redundancy. For core switches, critical components such as management modules and power supplies should be redundant so that the network stays as stable as possible.

2.8 Routing Redundancy

Using the HSRP and VRRP protocols provides load sharing and hot backup for core devices. When a core switch or one of the dual aggregation switches fails, Layer 3 routing and the virtual gateway quickly fail over to the surviving device, providing dual-path redundancy and keeping the overall network stable.
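The failover behavior described above can be illustrated with a simplified model of VRRP master election, in which the live router with the highest priority owns the virtual gateway and a tie goes to the higher IP address. This is only a sketch: it ignores advertisement timers, preemption settings, and the protocol's state machine, and the router names, priorities, and addresses are hypothetical.

```python
from dataclasses import dataclass, replace
from ipaddress import IPv4Address
from typing import Iterable, Optional


@dataclass(frozen=True)
class Router:
    name: str
    priority: int          # higher value wins the election
    address: IPv4Address   # primary interface address, used as tie-breaker
    alive: bool = True


def elect_master(routers: Iterable[Router]) -> Optional[Router]:
    """Return the live router that should own the virtual gateway, if any."""
    candidates = [r for r in routers if r.alive]
    if not candidates:
        return None
    # Compare by priority first, then by IP address to break ties.
    return max(candidates, key=lambda r: (r.priority, r.address))


# Hypothetical pair of core switches sharing one virtual gateway
core_a = Router("core-a", priority=120, address=IPv4Address("10.0.0.2"))
core_b = Router("core-b", priority=100, address=IPv4Address("10.0.0.3"))

print(elect_master([core_a, core_b]).name)  # prints "core-a"

# Simulate a failure of core-a: the backup takes over the gateway role.
failed_a = replace(core_a, alive=False)
print(elect_master([failed_a, core_b]).name)  # prints "core-b"
```

Hosts keep pointing at the same virtual gateway address throughout; only the device answering for it changes.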

3. Summary

To summarize, core switches can be characterized by these 16 points:

1. High backplane bandwidth for faster data forwarding.

2. Flexible networking suitable for large and medium-sized network access layers.

3. Flexible port options based on network applications, such as SFP, GE, Fast Ethernet, and Ethernet ports.

4. Support for VLAN segmentation enables users to partition the network into different zones based on applications, effectively controlling and managing the network, and further mitigating broadcast storms.

5. Managed switches with high data throughput, low packet loss, and low latency.

6. Control of data flows based on source, destination, and network segment.

7. Link aggregation allows switches and servers to be bonded through multiple Ethernet ports for load balancing.

8. ARP protection to reduce network ARP spoofing.

9. MAC address binding.

10. Port mirroring to copy traffic and status from one port to another for monitoring.

11. Support for DHCP.

12. Access control lists (ACLs) to filter IP packets, for example to limit traffic, restrict access, and support QoS.

13. Better security features, such as MAC address filtering, locking, and static MAC forwarding tables.

14. Support for IEEE 802.1Q and port-based VLANs, including GVRP and GMRP.

15. SNMP functionality for better network management and control.

16. Easy expansion and flexible application, manageable through network management software or remote access control, increasing network security and controllability.
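As an illustration of point 7, the sketch below shows one common way link aggregation can spread traffic: each flow is hashed to one member link, so packets of the same flow stay in order on a single link while different flows spread across the bundle. The hash inputs, the use of CRC32, and the link names are assumptions made for this example; real switches use vendor-specific hash functions and configurable fields.

```python
import zlib
from typing import Sequence


def pick_member_link(src_ip: str, dst_ip: str, src_port: int, dst_port: int,
                     links: Sequence[str]) -> str:
    """Map a flow to one member link of an aggregated group.

    The same flow always hashes to the same link, which preserves packet order.
    """
    key = f"{src_ip}|{dst_ip}|{src_port}|{dst_port}".encode()
    return links[zlib.crc32(key) % len(links)]


# Hypothetical bundle of four Gigabit links between a switch and a server
bundle = ["ge-0/0/1", "ge-0/0/2", "ge-0/0/3", "ge-0/0/4"]

first = pick_member_link("192.168.1.10", "192.168.1.200", 50000, 443, bundle)
again = pick_member_link("192.168.1.10", "192.168.1.200", 50000, 443, bundle)
print(first == again)  # prints "True": a flow sticks to one link
```

Because distribution is per flow, a single large flow cannot exceed the speed of one member link; the aggregate gain appears only when many flows share the bundle.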