How to Design a Data Center: 13 Key Points

Apr 08, 2026


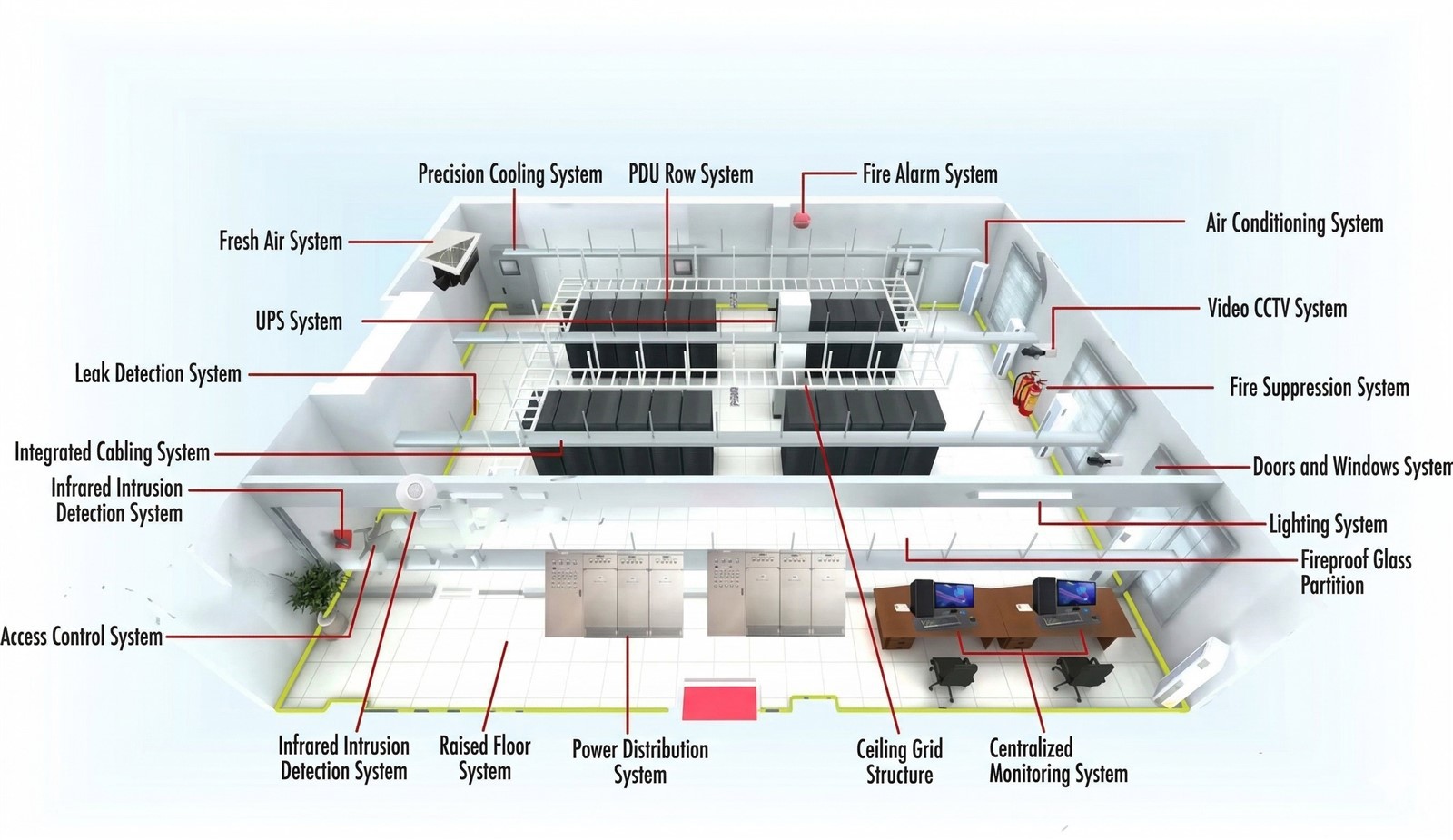

A comprehensive data center construction project generally includes: network cabling, raised anti-static flooring, ceiling and wall finishing, partitions, UPS systems, precision air conditioning, environmental monitoring, fresh air systems, leak detection, grounding, lightning protection, access control, video surveillance, fire suppression, alarms, and electromagnetic shielding.

How should a data center be designed, and what are the key points in the design and construction process? Let's break them down.

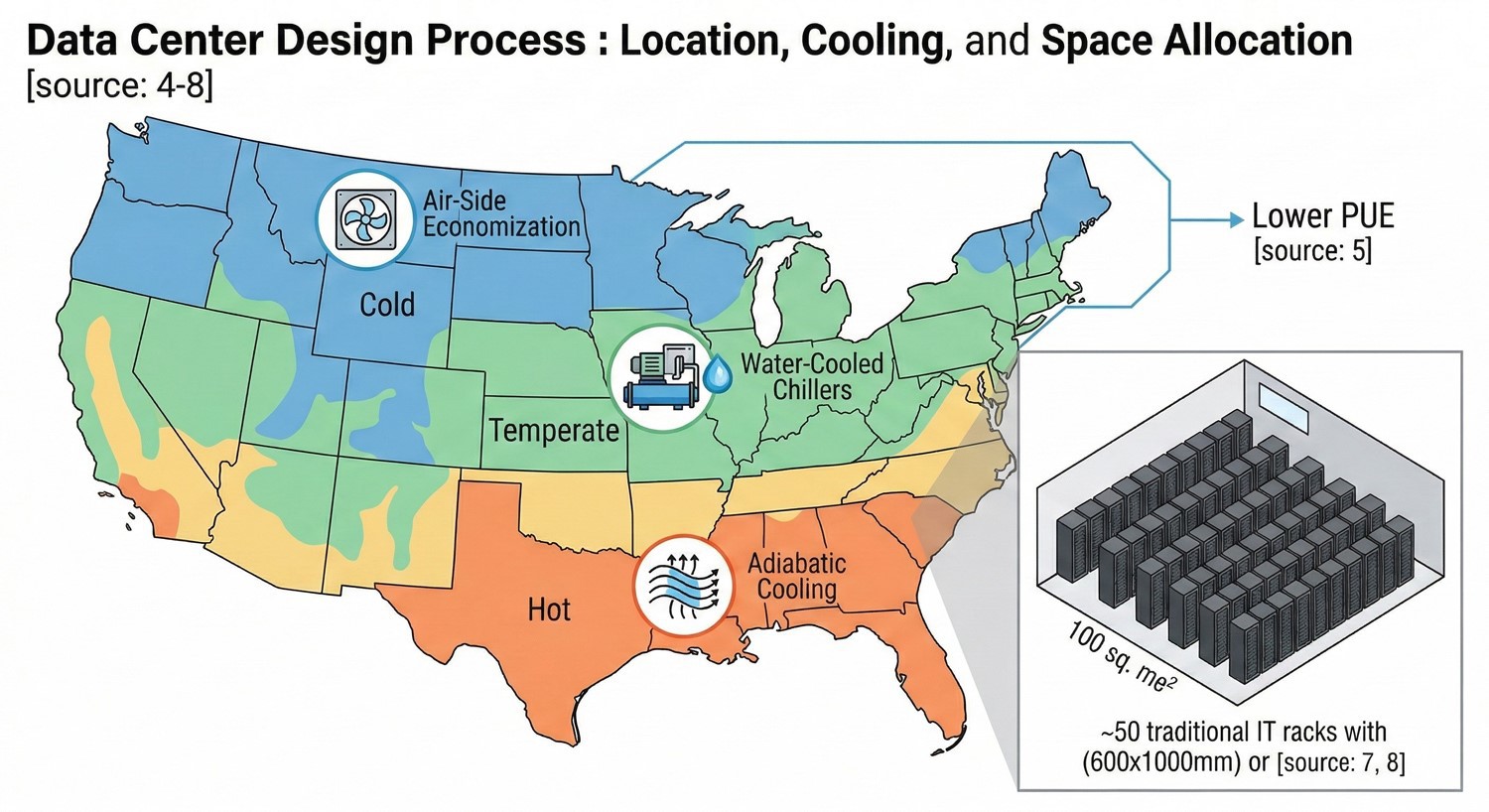

I. Where will the data center be located?

II. How many racks are needed, and what are their dimensions?

The number of racks determines the data center's space requirements. A traditional IT rack measures 600 × 1000 mm (width × depth). Once aisles and clearances are included, a 100-square-meter room can accommodate approximately 50 such racks. Of course, racks come in other sizes. Knowing the rack dimensions and quantity allows a straightforward estimate of the required space.
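The space estimate can be turned into a quick calculation. The sketch below is a minimal illustration, assuming a gross allowance of about 2 m² per rack, back-derived from the 100 m² / 50-rack rule of thumb and covering aisles and clearances. The function names and the allowance are hypothetical; replace the allowance with figures from an actual room layout.

```python
import math

# Assumed gross floor area per rack (m^2), INCLUDING aisles and clearances.
# Back-derived from the article's rule of thumb (about 50 racks in 100 m^2);
# this is an assumption, not a standard value.
GROSS_AREA_PER_RACK_M2 = 2.0


def rack_footprint_m2(width_mm: float, depth_mm: float) -> float:
    """Net footprint of a single rack in square meters."""
    return (width_mm / 1000) * (depth_mm / 1000)


def required_room_area_m2(rack_count: int,
                          area_per_rack_m2: float = GROSS_AREA_PER_RACK_M2) -> float:
    """Rough white-space area needed to house rack_count racks."""
    return rack_count * area_per_rack_m2


def racks_that_fit(room_area_m2: float,
                   area_per_rack_m2: float = GROSS_AREA_PER_RACK_M2) -> int:
    """Rough number of racks a room of the given area can hold."""
    return math.floor(room_area_m2 / area_per_rack_m2)


if __name__ == "__main__":
    print(rack_footprint_m2(600, 1000))  # 0.6
    print(racks_that_fit(100))           # 50
    print(required_room_area_m2(120))    # 240.0
```

At 600 × 1000 mm, 50 racks occupy only about 30 m² of the 100 m² room; the rest goes to aisles and clearances, which is why the gross allowance per rack, not the rack footprint itself, drives the estimate.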

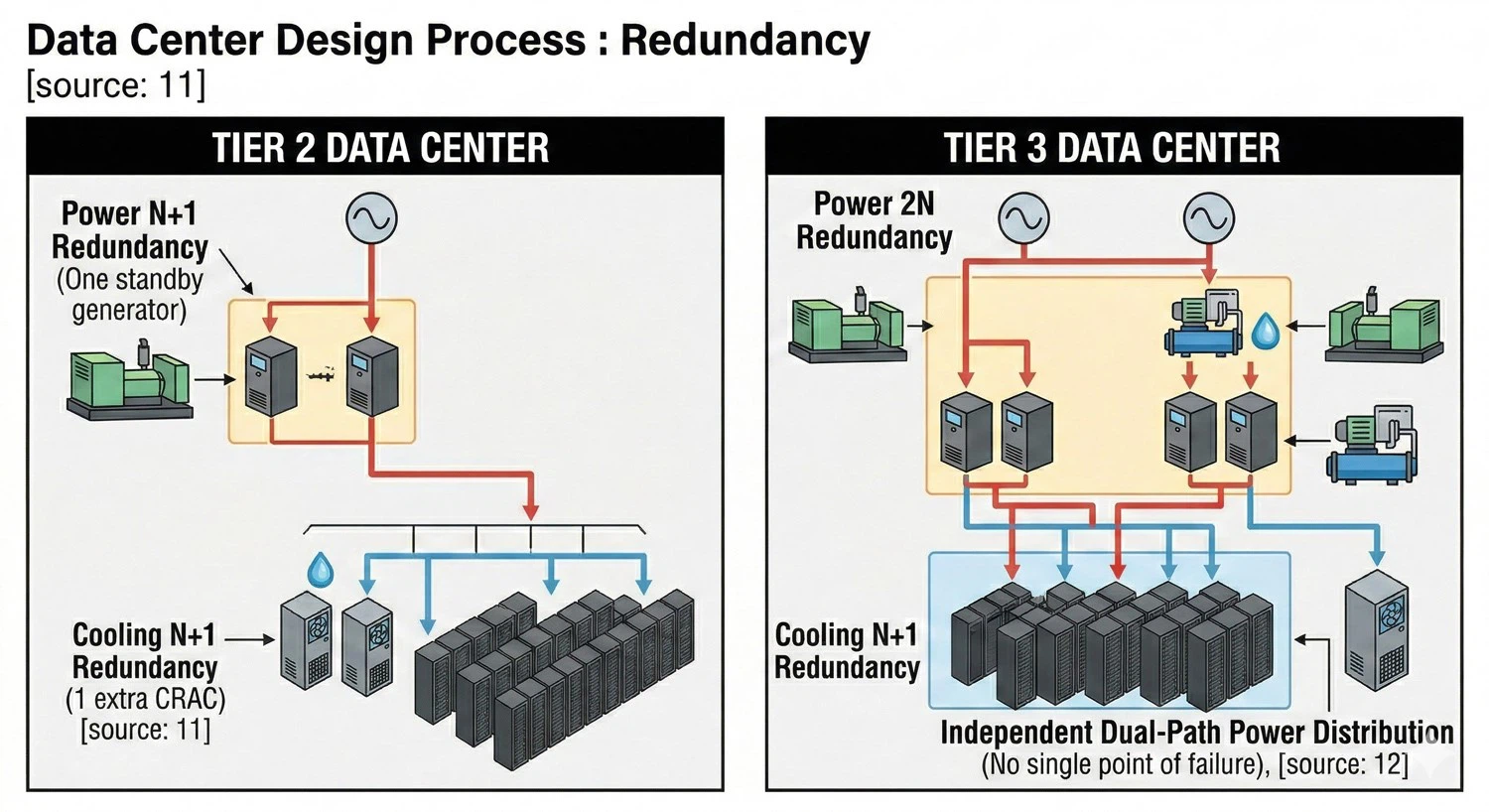

III. What Tier level is required for the data center?

IV. What is the average power density per rack?

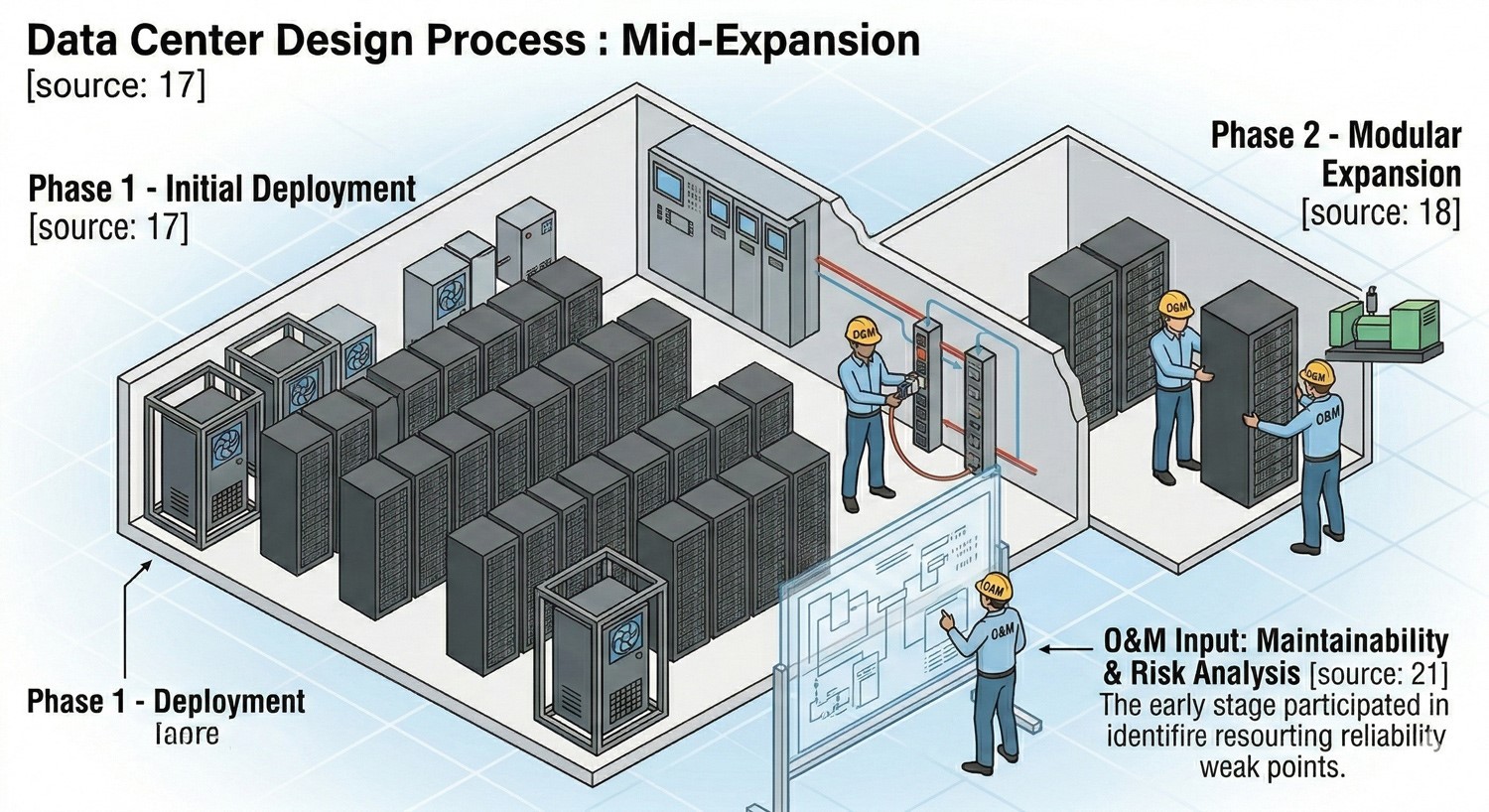

V. Should operations and maintenance personnel participate in planning and design?

Absolutely. Involving O&M staff in planning and design generally achieves the following:

a. Involving O&M in early-stage planning compensates for gaps in designers' knowledge of how the systems actually operate, improves design quality, and avoids or eliminates design flaws.

b. Involving O&M ensures that operational-phase requirements are fully considered during planning.

c. Involving O&M allows personnel to thoroughly understand the system's structure, reliability weak points, legacy issues, and potential risks, thereby improving O&M quality and enabling well-founded maintenance and upgrade plans.

VI. Avoid being influenced by internal and external factors

Problems often stem from failing to distinguish between tendencies, preferences, limitations, and hard constraints, and from not adhering to sound design principles. The following advice applies:

a. During approvals and decision-making, avoid letting individual decision-makers cut or alter key functions based on personal opinion; otherwise the delivered data center may fail to meet operational and maintenance needs.

b. Avoid actions driven by bias, preference, or vested interests. During planning, some vendors may influence design development and equipment selection by exaggerating performance or using misleading terminology.

VII. How much backup battery capacity do AC or DC racks need?

Server racks may require 100% DC power, 100% AC power, or a combination. For example, a colocation data center might need an AC (UPS) power system, while a telecommunications facility may require a DC power system. Knowing this mix determines the required size and scale of the DC plant or UPS system. When sizing backup batteries, a configuration based on a 15-minute discharge time is recommended. It keeps capital expenditure down and is more cost-effective, although the rationale can seem counterintuitive: the batteries only need to carry the load until the backup generators start and take over. Companies should therefore invest in backup generator redundancy rather than in excessive battery capacity.
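The effect of the discharge-time choice can be seen in a simple energy calculation. This is a minimal sketch only: the conversion efficiency (0.95) and usable fraction (0.8, standing in for depth-of-discharge and aging margin) are placeholder assumptions, not values from this article or any standard, and `battery_energy_kwh` is a hypothetical helper. Real battery sizing also depends on discharge-rate effects and temperature, which this ignores.

```python
# Placeholder assumptions, NOT values from the article or any standard:
ASSUMED_CONVERSION_EFFICIENCY = 0.95  # inverter/rectifier losses
ASSUMED_USABLE_FRACTION = 0.8         # depth-of-discharge and aging margin


def battery_energy_kwh(it_load_kw: float,
                       runtime_min: float = 15.0,
                       conversion_efficiency: float = ASSUMED_CONVERSION_EFFICIENCY,
                       usable_fraction: float = ASSUMED_USABLE_FRACTION) -> float:
    """Rough nominal battery energy (kWh) needed to carry it_load_kw for runtime_min."""
    if it_load_kw < 0 or runtime_min < 0:
        raise ValueError("load and runtime must be non-negative")
    if not (0 < conversion_efficiency <= 1 and 0 < usable_fraction <= 1):
        raise ValueError("efficiency and usable fraction must be in (0, 1]")
    # Energy the load actually draws during the outage.
    delivered_kwh = it_load_kw * runtime_min / 60.0
    # Gross up for conversion losses and the fraction of capacity we allow ourselves to use.
    return delivered_kwh / (conversion_efficiency * usable_fraction)


if __name__ == "__main__":
    load_kw = 200.0  # hypothetical IT load
    for minutes in (15, 60):
        print(minutes, "min:", round(battery_energy_kwh(load_kw, minutes), 1), "kWh")
```

With these assumptions a 200 kW load needs roughly 66 kWh for 15 minutes but roughly 263 kWh for 60 minutes. Because the required energy scales linearly with runtime, longer autonomy multiplies the battery bank, which is the cost the 15-minute recommendation avoids when generators are available to take over.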

VIII. Avoid undervaluing planning/design and overemphasizing construction

The industry tends to undervalue planning and design while overemphasizing construction. This shows up mainly as:

a. Constructing the building shell first and planning the data center afterward, creating significant design difficulties.

b. Renovation work that starts immediately after the server room is built and the equipment installed, which is a widespread phenomenon.

c. Selecting equipment before finalizing the design, leading to equipment replacement before operation because the purchased gear doesn't meet design requirements or site conditions.

d. Building structures that fail to meet data center layout needs, resulting in poor room zoning; outdoor air-conditioning units that cannot be installed or must be placed too far away; and excessive distance between the power room and the main computer room, which increases complexity and cost and reduces reliability.

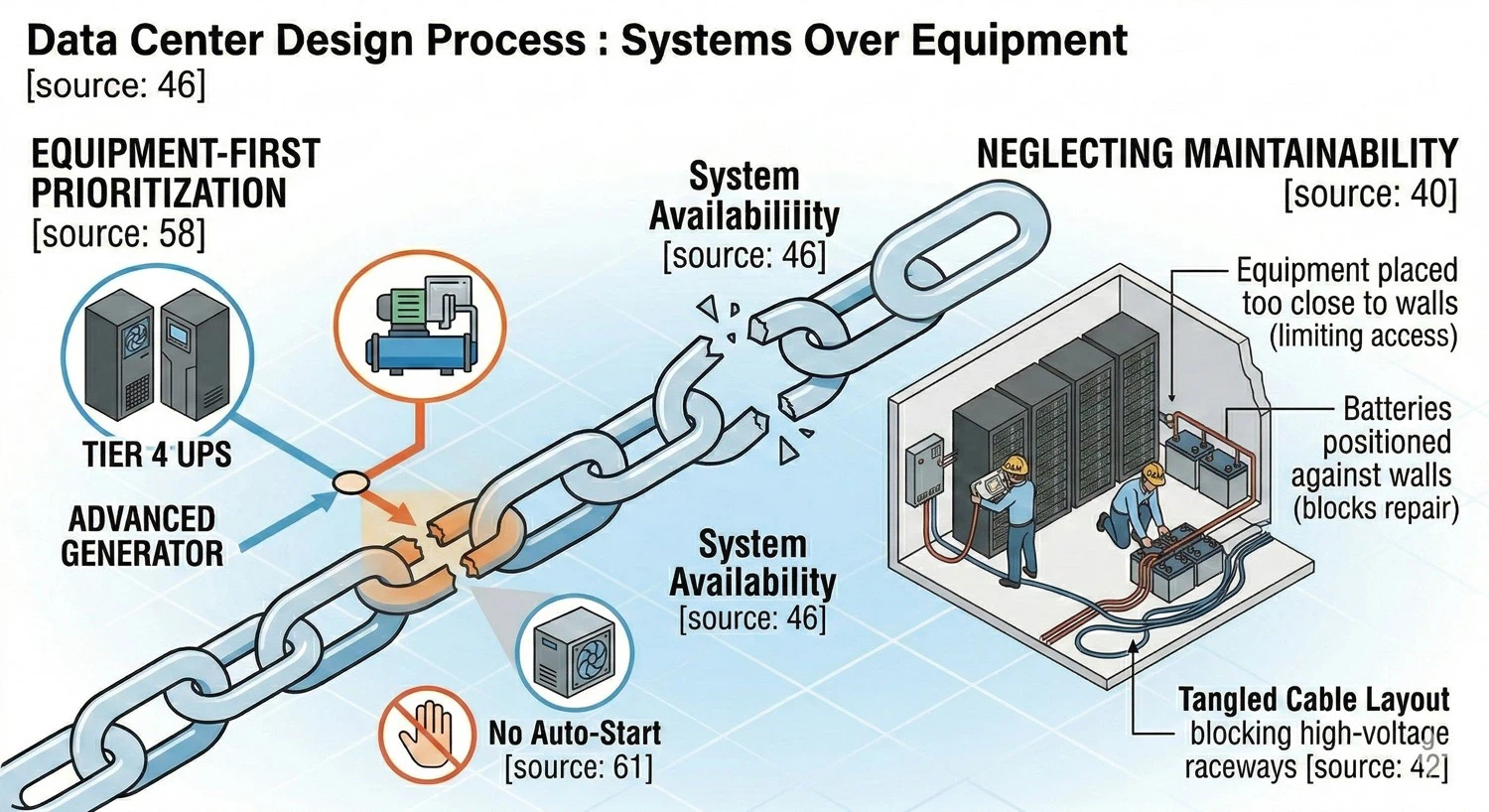

IX. Avoid neglecting system maintainability and repairability in design

a. Failing to consider future maintenance access and space during planning: for example, equipment placed too close to walls, batteries pushed against walls, poor cable layout, conduits or cable trays that block overhead low-voltage raceways and hinder repairs, and inadequate room for tools.

b. During failures, emergency supplies and spares cannot be moved quickly, and there's no workspace for replacing faulty components, delaying resolution and potentially causing major incidents.

c. Not considering whether the system still has redundancy while failed equipment is being maintained.

d. Not maximizing automation to reduce manual intervention, which would lower the uncertainty and uncontrollability inherent in manual procedures.

X. Avoid availability designs that lack a scientific basis

System availability is the paramount metric in data center planning, but availability designs often lack scientific grounding. This is evident in:

a. While reliability calculations are performed for various systems during planning, design institutes and individual designers currently lack unified methodologies and data sources, leading to differing definitions and results for the same data center's design tier and reliability.

b. Cases exist where planning and construction proceed first, and the design tier is reverse-engineered after completion, then promoted to users based on this derived standard. This is a classic case of putting the cart before the horse; often, a few key flaws degrade the tier rating even though most of the design meets the requirements.

c. Focusing only on the availability of individual devices or subsystems while ignoring how interdependencies affect overall system availability.
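Point c can be illustrated with the standard series and parallel availability formulas: in a series chain the availabilities multiply, while in a redundant (parallel) group the unavailabilities multiply. This sketch assumes independent components with steady-state availabilities and ignores common-cause failures; the component figures are hypothetical.

```python
from functools import reduce


def series(*availabilities: float) -> float:
    """Every component must work: A = A1 * A2 * ... * An."""
    return reduce(lambda acc, a: acc * a, availabilities, 1.0)


def parallel(*availabilities: float) -> float:
    """Any one redundant path suffices: A = 1 - (1 - A1)(1 - A2)...(1 - An)."""
    unavailability = reduce(lambda acc, a: acc * (1.0 - a), availabilities, 1.0)
    return 1.0 - unavailability


if __name__ == "__main__":
    # Hypothetical steady-state availabilities, assuming independent failures.
    ups_pair = parallel(0.999, 0.999)      # redundant UPS modules
    whole_path = series(ups_pair, 0.9999)  # ...feeding one shared distribution panel
    print(f"{ups_pair:.6f} {whole_path:.6f}")  # 0.999999 0.999899
```

The redundant UPS pair alone reaches about six nines, but the single shared panel raises the path's unavailability by roughly two orders of magnitude. Real interdependencies (shared cooling, shared controls, common-cause faults) break the independence assumption, so results from a model like this are likely to be optimistic.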

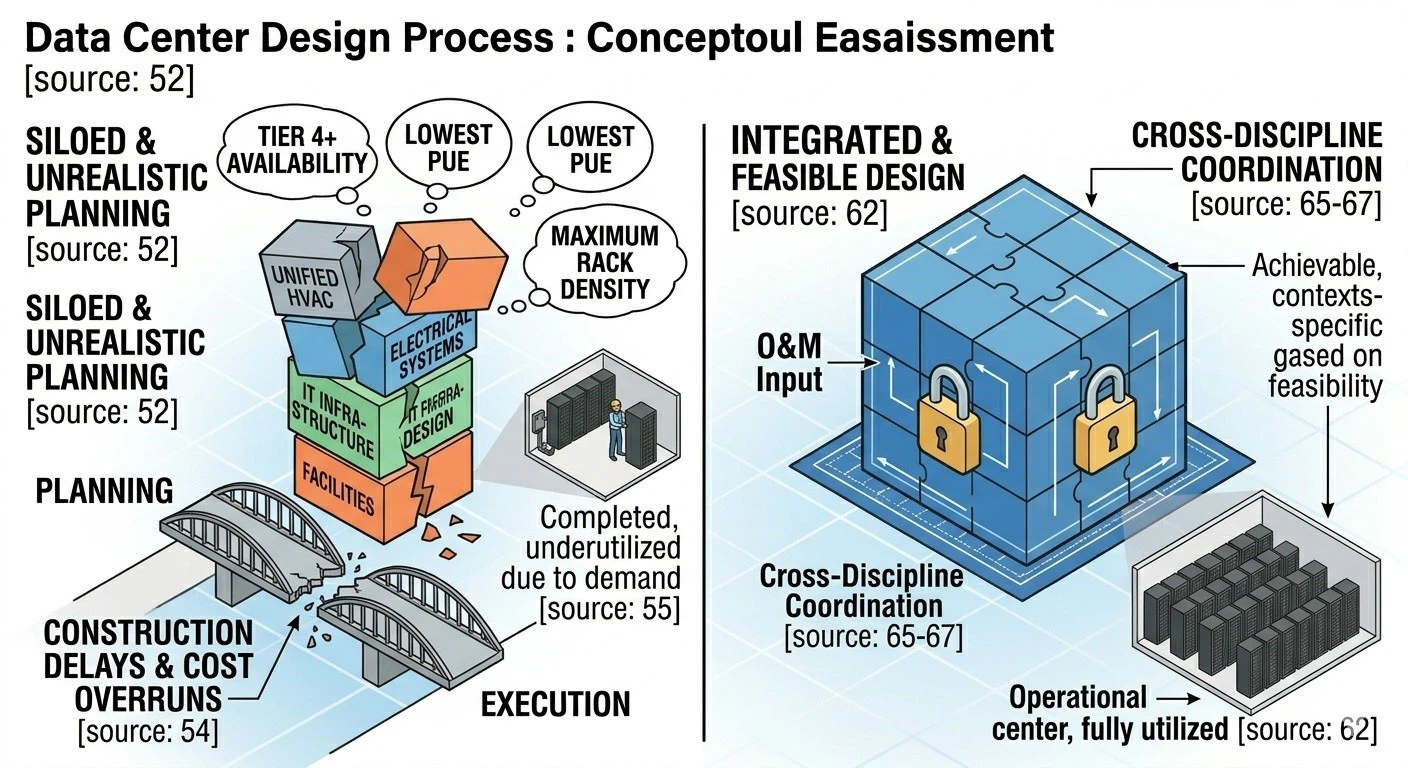

XI. Avoid setting high targets divorced from actual needs and feasibility

During initial planning, subjectively setting ambitious data center targets (unrealistically large scale, high availability tiers, high rack power density, and low PUE) is problematic. When detailed design then proceeds without the rigorous analysis that planning principles call for, the specific plans and measures fall out of step with the overall vision. The results are:

a. Unclear actual needs and lack of feasible prerequisites lead to repeated design changes, wasting cost and significantly extending the timeline.

b. Completed and operational rooms are underutilized due to lack of anticipated demand or because room conditions don't meet user needs, requiring further retrofit.

c. Planned functionalities go unrealized: system availability falls short, the cooling solution doesn't support the intended rack density, generators don't support continuous operation, or over-design keeps the PUE stubbornly high.
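Why over-design keeps PUE high can be shown from the PUE definition: total facility power divided by IT equipment power. This sketch assumes a hypothetical overhead model, a fixed part drawn regardless of IT load (for example idle equipment losses and fans) plus a part proportional to IT load; the numbers are illustrative, not measurements.

```python
def pue(it_load_kw: float, fixed_overhead_kw: float, proportional_overhead: float) -> float:
    """Power Usage Effectiveness = total facility power / IT equipment power.

    Hypothetical overhead model: a fixed part drawn regardless of IT load
    plus a part proportional to IT load (proportional_overhead * it_load_kw).
    """
    if it_load_kw <= 0:
        raise ValueError("IT load must be positive")
    overhead_kw = fixed_overhead_kw + proportional_overhead * it_load_kw
    return (it_load_kw + overhead_kw) / it_load_kw


if __name__ == "__main__":
    # Hypothetical facility built for 1000 kW of IT load that only partly fills up.
    for it_kw in (1000.0, 500.0, 300.0):
        print(f"IT load {it_kw:.0f} kW -> PUE {pue(it_kw, 150.0, 0.25):.2f}")
```

Under these assumptions PUE rises from about 1.40 at full load to about 1.75 at 30% load, because the fixed overhead sized for the ambitious target is spread over a smaller IT load.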

XII. Avoid the misconception of prioritizing equipment over systems

a. Selecting equipment specifications, models, or even manufacturers first, then adapting the design accordingly.

b. Specifying 2N redundancy for the power system (a configuration associated with the highest availability tiers) but implementing it only at the UPS level, leaving single points of failure elsewhere in the distribution path.

c. Designing the entire system as a top-tier redundant/fault-tolerant system but powering the cooling equipment via a single path.

d. Providing a backup diesel generator for the air-conditioning system without automatic start capability, which reflects a failure to understand that continuous cooling is essential for continuous system operation.
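Items b and c both describe redundancy that stops partway along a path. One way to check a design for this is to model the power paths as a directed graph and test, for each component, whether its failure alone cuts a load off from every source. The sketch below is a minimal illustration with a hypothetical topology: a 2N UPS pair feeding one shared PDU, and cooling fed through a single motor control centre (`mcc`). It checks single failures only.

```python
from collections import deque

# Hypothetical topology; edges point in the direction power flows.
POWER_PATHS = {
    "utility":   ["ups_a", "ups_b", "mcc"],
    "generator": ["ups_a", "ups_b", "mcc"],
    "ups_a":     ["pdu"],
    "ups_b":     ["pdu"],
    "pdu":       ["it_racks"],
    "mcc":       ["cooling"],  # single path to the cooling units
}
SOURCES = ["utility", "generator"]


def is_fed(graph, sources, load, removed=None):
    """True if `load` is reachable from any source without passing through `removed`."""
    queue = deque(s for s in sources if s != removed)
    seen = set(queue)
    while queue:  # breadth-first search along power-flow edges
        node = queue.popleft()
        if node == load:
            return True
        for nxt in graph.get(node, []):
            if nxt != removed and nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return False


def single_points_of_failure(graph, sources, load):
    """Components whose individual failure leaves `load` without power."""
    nodes = set(graph) | {n for targets in graph.values() for n in targets}
    return sorted(
        n for n in nodes
        if n != load and not is_fed(graph, sources, load, removed=n)
    )


if __name__ == "__main__":
    print(single_points_of_failure(POWER_PATHS, SOURCES, "it_racks"))  # ['pdu']
    print(single_points_of_failure(POWER_PATHS, SOURCES, "cooling"))   # ['mcc']
```

Despite the redundant UPS pair and two sources, the IT load still depends on one PDU and the cooling on one MCC, which is exactly the kind of gap a UPS-only view of 2N misses.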

XIII. Emphasize integrated design and improve system integration capability

This is crucial for high-quality planning and design.

a. Many problems arise during construction because planning insufficiently considers phased, discipline-specific implementation and coordination between different trades. This results in delivered data centers failing to meet business and maintenance needs, sometimes requiring major investment to fix.

b. Designers often focus solely on their own scope, lacking a holistic view of how their work interfaces with other disciplines, leading to conflicts and gaps.

c. Planners may misjudge future business growth, giving insufficient thought to future capacity management and expansion.

d. Unfamiliarity with the surrounding resources and physical environment leads to designs with poor implementability or that create severe difficulties for later operations.
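Misjudging growth (point c) matters because capacity runway is sensitive to the growth rate. A minimal sketch, assuming demand grows at a constant compound annual rate (a simplification) and using hypothetical rack counts; `years_until_full` is an illustrative helper, not a standard method.

```python
import math


def years_until_full(current_racks: float, capacity_racks: float,
                     annual_growth: float) -> float:
    """Years until demand growing at a compound annual rate reaches capacity.

    Solves current_racks * (1 + annual_growth) ** t = capacity_racks for t.
    """
    if current_racks <= 0 or annual_growth <= 0:
        raise ValueError("current demand and growth rate must be positive")
    if current_racks >= capacity_racks:
        return 0.0  # already at or over capacity
    return math.log(capacity_racks / current_racks) / math.log1p(annual_growth)


if __name__ == "__main__":
    # Hypothetical: 200 racks in use today, room for 500.
    for growth in (0.20, 0.35):
        print(f"{growth:.0%} growth -> {years_until_full(200, 500, growth):.1f} years")
```

In this example the runway is roughly 5 years at 20% annual growth but only about 3 years at 35%, so an optimistic growth estimate can bring the next expansion forward by years.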

Summary

Many other issues deserve consideration when building a new data center. However, industry experience shows that mastering these 13 key points during design and construction helps ensure that the final result closely matches genuine user needs, a lesson worth learning.